Daniel Dennett and Gary Marcus team up to talk some sense into society

My favorite AI philosopher and the world’s favorite AI naysayer, together again one last time in a blog post: mail.proton.me/u/0/inbox…

Having holiday fun implementing graph search for multiplayer realtime bot competitions on www.codingame.com/ide/puzzl… . Forces you to boil AIMA down to just the basics. And they support Rust or Python, but not Mojo yet. Hope to find an open source alternative soon.

Staying relevant on professional social media 4/4

People that contribute something valuable to my feed deserve more than just a follow or like. So, I visit their profile page and click the triple-dot menu or alarm icon to select the “Notify All” radio button. This helps crowd out some of the BS that clogs my feed and my brain. 4/4

Staying relevant on professional social media 3/4

TL;DR: You want to be true to your values, have impact… and keep your job.

Staying relevant on professional social media 2/4

Four ideas or values that can help you stay relevant on professional social media 2/4

Staying relevant on professional social media 1/4

It’s hard to stay up to speed and connected in an accelerating world where what you see and who you connect to is #monetized by corpos. And if you are a software developer, staying informed can mean the difference between winning your dream job and getting laid off.

LessWrong interpretability research

I’m definitely going to be following these two mechanistic interpretability projects next year. At the very least, it will be a fun mini project to answer these 6 application questions over the year-end holidays.

Below are direct quotes from LessWrong.org with links to full project details.

LessWrong Project applications

Encoded Reasoning

“By evaluating outputs and considering model internals with mechanistic interpretability techniques, we will study how and why models might hide their reasoning outputs in unfaithful chain-of-thought, and compare the capabilities of reasoning models with traditional LLMs.”

Application questions

- Lanham et al (2023) define encoded reasoning as follows: “Encoded Reasoning: The benefit from CoT may be attained by LLMs encoding the relevant information in the generated reasoning in a way that is not understandable to human readers (a form of steganography). This may be through changes in e.g. punctuation, word choice, or other phrasing differences that improve the LLM’s predictions, but in a way that is not clearly understandable by a human.” How would you design the prompt to encourage a model to encode its reasoning? What might a successful output look like? Explain why this output is an example of encoded reasoning. They also describe the paraphrasing defense against encoded reasoning on P6, in which an LLMs output is re-written sentence by sentence. How might you design an encoded reasoning scheme that is robust to paraphrasing?

- Write a Python function that tests for the semantic equivalence between an output before and after paraphrasing. You can do this with or without LLM assistance. If you use LLMs, address harder instances where semantic equivalence may be borderline or ambiguous.

- Write a prompt for an LLM monitoring for encoded reasoning. Does this require any additional scaffolding?
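As a starting point for the second question above, here is a minimal sketch of a no-LLM baseline. It assumes that word overlap (bag-of-words cosine similarity) is an acceptable first proxy for meaning, which it often is not. The function names and the 0.6 threshold are my own placeholders, not anything from the project description.

```python
import math
import re
from collections import Counter


def bag_of_words(text):
    """Lowercase the text and count its word tokens."""
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def cosine_similarity(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[word] * b[word] for word in a.keys() & b.keys())
    norm_a = math.sqrt(sum(count * count for count in a.values()))
    norm_b = math.sqrt(sum(count * count for count in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def roughly_equivalent(before, after, threshold=0.6):
    """Return (verdict, score) for an output before and after paraphrasing.

    The threshold is an arbitrary assumption and would need tuning.
    """
    score = cosine_similarity(bag_of_words(before), bag_of_words(after))
    return score >= threshold, score
```

This baseline fails in both directions: a good paraphrase that swaps in synonyms scores low, and a negation ("is safe" versus "is not safe") scores high. Those are exactly the borderline cases the question asks about, so a real answer would probably need sentence embeddings or an LLM judge on top.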

Sparse Geometry Formal Verification for Interpretability

“Explore sparse representations in LLMs using SAEs, LoRA, latent geometry analysis, and formal verification tools. We’ll build toy models, benchmark structured priors, and probe “deceptive” features in compressed networks.”

Application questions

- Briefly describe a project where you implemented or modified an ML model/theoretical research. What went well, and what was challenging? (250 words)

- How would you detect “subliminal” features (features that store knowledge but don’t affect output) in a sparse autoencoder trained on transformer activations? (200 words)

- (Optional) Link to any relevant code or writing sample.

Pre-Emptive Detection of Agentic Misalignment via Representation Engineering

“This project leverages Representation Engineering to build a “neural circuit breaker” that detects the internal signatures of deception and power-seeking behaviors outlined in Anthropic’s agentic misalignment research. You will work on mapping these “misalignment vectors” to identify and halt harmful agent intent before it executes.”

Doesn’t seem very promising to me, so I won’t bother thinking about the application questions. But there could be something to glean from one of their earlier projects: “…novel safety framework for autonomous LLM agents by combining Representation Engineering (RepE) with the specific risk profiles identified in Anthropic’s Agentic Misalignment research”

Aurelius Podcast Prep - "The good, the bad, and the ugly of AI"

I’ve been thinking of questions to talk about at tomorrow’s Aurelius Podcast interview. Isabel Wen wants to talk about “the good, bad and ugly of AI.” I’m having trouble coming up with much good to talk about…

Why do LLMs keep changing their answer even when I ask the exact same question?

LLMs are designed to be stochastic, to give a different answer every time, but even if you set all the parameters to be 100% deterministic they will still hallucinate. They are designed to. It’s how they pretend to be smart. Can you imagine how dumb they would look if they kept repeating the same hallucination every time you asked a tricky question the same way? The AI company is trying very, very hard to keep you coming back for more. Changing things up is how AI companies experiment on you to find your hot buttons and keep you engaged. That used to be called attention hijacking, but somehow we forget all about that once we get sucked into a convo with sycophantic autocomplete.
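A minimal sketch of where the randomness comes from, assuming a toy next-token distribution. The tokens and scores below are made up for illustration, and `sample_next_token` is a hypothetical helper, not any vendor's API. With temperature 0 the pick is the same every time, whether or not it is true. With temperature above 0 the pick varies from run to run.

```python
import math
import random


def sample_next_token(logits, temperature, rng):
    """Pick one token from a dict mapping token -> score (logit)."""
    if temperature == 0:
        # Greedy decoding: always return the highest-scoring token.
        return max(logits, key=logits.get)
    # Softmax with temperature; subtract the max for numerical stability.
    scaled = {token: score / temperature for token, score in logits.items()}
    top = max(scaled.values())
    weights = [math.exp(score - top) for score in scaled.values()]
    return rng.choices(list(scaled), weights=weights, k=1)[0]


# Made-up scores for the word after "The capital of France is".
toy_logits = {"Paris": 1.2, "Lyon": 1.0, "France": 0.8}
rng = random.Random()

greedy = {sample_next_token(toy_logits, 0, rng) for _ in range(20)}
sampled = {sample_next_token(toy_logits, 1.0, rng) for _ in range(200)}
print(greedy)   # a single token, repeated on every call
print(sampled)  # typically more than one distinct token
```

Nothing in the greedy branch checks facts. It only repeats whichever token scored highest, so a deterministic setting makes a wrong answer repeatable, not correct.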

What about the training of LLMs? Can’t they be trained to stick to the facts?

RLHF is reinforcement learning from human feedback, and it relies on your thumbs up on its answers. And before a model is released to you it is forced upon thousands of low wage workers overseas. The objective for the companies behind AI is user engagement… your engagement. Think about that. They want you there as long as possible. It’s why Google Search became so clumsy: they wanted you wading through SLOP to get to the bits of info you were looking for.

What about context length? I hear that LLMs can handle millions of words at a time.

Your prompt can never contain enough context for a language model to reliably guess what you want it to say. There’s no way you can dump all your thoughts into a prompt, much less all the relevant content from the internet. That’s the hard work of thoughtful research and writing. Not something an LLM can do, no matter how much you let it talk to itself and try to sort out what you really want.

What are some things that AI is bad at?

Imagine starting a thank you note to the father of your childhood best friend, the kind of father that would put a $100 bill in a Hallmark card, and he would be the only person to give you anything useful for graduation. You could start the note with Dear, or Hi, or Hello, or you could ask spicy autocomplete what the right word should be. You’d be giving up a tiny bit of your intent and care, but maybe that first word doesn’t matter too much and it starts your letter with the same word you would have chosen: “Dear”. From there you could let it keep generating, and it might spit out Sir or Sirs if you didn’t give it some context by typing “Don”. Here’s where it gets interesting. If you started a letter with Dear Don, spicy autocomplete would go off the rails immediately. It might autocorrect you and start a Dear Jon letter, or it might complete the name Don with Donald Trump, but it could also complete it with Donald Duck or even Donald Dumptruck. It depends on which Reddit post your context or prompt has loaded the dice for. It has nothing to do with how thoughtful that gift from Don Foster was, or all the fun things you are going to do with that $100. It’s going to be about someone else’s Donald in some random Reddit or social media post, or maybe even some novel about some high school graduation with some character named Don… you can’t win. No matter how much context you type, it can’t turn your best thoughts and intents into words. It will steer the conversation in some random direction that doesn’t represent your best self. So don’t use it for any email you care about.

So it’s not great at writing personal communication, what about business communication?

Absolutely. It’s great at marketing copy, SEO content and internal memos. It’s especially good for some bumbling billionaire big-tech boss. You don’t really care whether your email creates another million in revenue for him. You probably don’t want to pour your heart and soul into that kind of e-mail. So definitely let AI pick the most popular Reddit language for that kind of e-mail. That might actually be the ethical thing to do, because your marketing e-mail will be more easily filtered by spam filters and discerning readers if you let AI do the talking for you. That way your enshittified product and clickbait e-mail snags fewer victims and you can tell your boss it wasn’t your fault. You can even show him all the careful prompting you did all day long to craft such a superintelligent marketing e-mail.

So tell me about how AI works, why does it get things wrong?

LLMs are what’s called “over-fit” in Machine Learning. It’s like teaching to the test, as if your high school teacher always handed you the exam the night before the test. And machines are really, really good at memorizing whatever you give them. For some reason the big tech companies pretended to be surprised when it aced the SATs after they fed it all the SATs they could get their hands on, including the ones used in the benchmarks. Benchmarks are like standardized tests in school. Cheaters will always do well. And teachers that teach to the test will have a classroom full of geniuses, or at least Ivy League darlings.

So how can businesses prevent hallucination?

Well, first it helps to recognize what hallucination is. It’s what language models are trained to do. LLMs are language models. They live on the Internet. They live in a dream world of 24-7 imagination and hallucination. They only know how to interact with words and images and TikTok videos. LLMs are never asked to wash their mouth out when they use a bad word or say something gross or evil. They never have to find their way to the fastest grocery line, or stop for pedestrians pushing their bike across a Phoenix road in the dark, or for a school bus at the railroad tracks. And when we tell them to, it never ends well. They never have to find their way through the forest or sneak across the neighbor’s back yard, or mow the lawn, or take out the trash. Or maintain a lifelong relationship with another human being or business.

What about world models, like what Yann LeCun left Meta to work on?

Some enterprising researchers have tried to connect LLMs to real world rewards and punishments and create what’s called “world models” using reinforcement learning. But at their core, LLMs can only process and generate words, and they do it well only if their words aren’t turned into actions in the real world. The models would have to learn how to generalize from a few burns at the stove or slaps of the wrist, otherwise robots are going to keep stumbling all over themselves. And when you let them play video games for days and days at a time, they learn “reward hacking,” fun back doors in a game’s scoring system that allow them to win without doing anything within the video game world.

So I thought AI was all about bigger compute and big data?

Well, you start by downsizing, decluttering. When big tech fed their AI all of Wikipedia, about 1 billion words, they gave it a gigabyte of memory. And when they gave it 20 Wikipedias of Reddit comments they gave it 20 GB. And now when they feed it a trillion words of rando Internet slop they give it a terabyte of memory. That’s called the scaling law. And scale only goes so far. Quality data has petered out and Altman called a “Code Red” a couple years too late. Google called their own “Code Red” 3 years ago, almost to the day. But they’re both barking up the wrong tree. Memorization and spicy autocomplete are not the path to intelligent machines. AlphaFold is the only AI model to actually create scientific breakthroughs, and it was built… The smartest models, in my opinion, are the smallest models that are able to go toe-to-toe with 1000x bigger models. And it’s not just my opinion, it’s the rule of thumb that AI experts have been using for decades, since long before Altman was born. Data-efficiency is the best measure of intelligence, not memory capacity. You only have to show a good student a couple answers to example questions before they can ace an exam with similar questions. You don’t give humans a million example math problem solutions before they figure it out.

How can people use AI so that it doesn’t hallucinate?

When you go to a typical AI website you are interacting with the biggest model you can afford. And it has been trained on, or memorized, as much data as they can get their hands on. And that’s the source of the problem. As you know from teaching and learning regenerative economics, you don’t ask a student to read an entire encyclopedia before writing a business case study. You teach them how to do focused research on a particular topic and follow a thread of thought to develop their own viewpoint. In AI this is called RAG: retrieval-augmented generation. You retrieve or research relevant text and use it to generate your report. And this is the key to building smart AI that stays on topic and doesn’t try to mash too much information together into a report.

So smaller is better?

Indeed. You want to use LLMs that are a thousand times smaller than the ones you hear about being pushed by BigTech. That way the LLM only has to do what it is good at, piecing together plausible, grammatically correct next token predictions. If you want it to have reasoning ability or add some facts it has never seen before, you can just retrieve that text and add it to your prompt and have the AI use the facts it finds there as part of its reply to you. In fact this is the only way you can expect any AI to know about any subject it hasn’t read about on the public Internet. If you have private business or personal data you want to process with AI, you better use a RAG, or the AI will be just guessing.
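Here is a minimal sketch of the retrieve-then-prompt idea, assuming a toy retriever that ranks notes by shared words. A real RAG would likely use an embedding model and a vector store instead. The notes, the question, and the function names are all made up for illustration, and the final call to a language model is left out.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=2):
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, documents, top_k=2):
    """Put the retrieved text in front of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents, top_k))
    return (
        "Answer using only the context below. "
        "If the answer is not there, say you don't know.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"
    )


# Made-up private notes that no public model has seen.
private_notes = [
    "The quarterly planning meeting is at noon in the main conference room.",
    "Our refund policy allows returns within 30 days of purchase.",
    "The office coffee machine is cleaned every Friday.",
]
prompt = build_prompt("What is the refund policy for returns?", private_notes, top_k=1)
print(prompt)
```

The prompt that comes out contains only the refund note, so a small model has the one fact it needs and nothing else to mash together.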

Did you see the ARC Prize leaderboard results? Isn’t the latest GPT-5.2 “Extreme Thinking” model getting 90% accuracy? Isn’t the ARC AGI Prize supposed to measure general intelligence? So are we getting close to superintelligence?

AI is doing great on the ARC-1 and ARC-2 challenges, but that’s only because it is allowed to cheat and it costs more than $10 per puzzle, and that’s the subsidized cost estimate that Chollet and his team came up with. AI only does well on the puzzles and tasks it has seen before. If you go to their website and filter out the cheating models, the accuracy is below 1%. It’s basically no better than a thousand toddlers poking at a tablet for 24 hours. An adult can get 90% accuracy in minutes, even on the hardest challenges that ChatGPT can’t even attempt, because they haven’t been released publicly and AI still has trouble using a browser. If you like I can show you how to filter out the trickery on these leaderboards. You can just find a version of the model that was trained before the benchmark dataset was made public. On the arcprize.org website they have given you some pulldowns that make those pretty charts a lot more boring and truthful, if you know what to look for.

What about AlphaFold or AlphaGo or self-driving cars?

Those systems use completely different algorithms to make decisions and reason about the world instead of just pretending to. And they make really smart decisions. The team behind AlphaFold was even awarded the Nobel Prize in Chemistry recently, and AlphaGo and its successor AlphaZero surpassed the best human players at Go, chess and shogi almost a decade ago. Recently LLMs have started to patch their systems with some of these algorithms, algorithms with names like Monte Carlo Tree Search and Markov chains. But this only helps LLMs answer questions in particular domains, like adding a conversational interface to a drug-discovery chemistry pipeline, so executives can keep tabs on their investment. But whenever they allow the LLM to do any of the thinking, it falls on its face. It’s like trusting a teenager at the controls of your car. They seem to be driving as well as the best professional chauffeur, until you step out of the car and they drive out of sight. Imagine if you relied on the spatial reasoning of an LLM to tell a self-driving car what to do and where to go… it doesn’t end well. Waymo can’t even keep self-driving cars from causing traffic jams even without LLMs rolling the dice on route selection.

Links

Reasoning technology

- Arc Prize Leaderboard

- CoreThink augmenting LLMs with symbolic reasoning : www.unite.ai/the-end-o…

- NSP: A Neuro-Symbolic NL Navigational Planner

- NOT Advancing Spatial Reasoning in LLMs: tests are formulated as tightly constrained QA that is effectively 8-category classification (or translation/code gen); 5 percent of the 214 sentence templates were found to contain errors; and because sentences were crowd-sourced, similar sentences were likely included in training sets for LLMs

Varieties of Doom

- The Great Software Quality Collapse

- Varieties of Doom by Pressman

- Existential Ennui by Katja Grace

- Knowledge Infrastructure Crisis

Authenticity

- AI as Counterfeit People by Daniel Dennett for The Atlantic

- Attention hijacking that can be prevented with watermarking

- Watermarking Public-Key Cryptographic Primitives - software watermarking for authenticity signing of software

- Fast public-key watermarking of compressed video

- A Survey of Text Watermarking in the Age of LLMs

- Immortality Pipe Dreams

- Moloch/capitalism eating our brains

International cooperation

- KAL 007

- Stanislav Petrov

- The EURion constellation uses watermarking to prevent money forging. The EU has always been on the forefront of authenticity verification, and the age of AI is no exception.

At tomorrow’s Aurelius Podcast interview I’ll be answering questions like: Why do LLMs keep changing their answers? How come AI outperforms the smartest humans at chess and graduate math competitions, yet it can’t count asterisks or answer simple questions without hallucinating?

Loving How to Hide an Empire by Immerwahr. I had no idea about all the hidden colonialist wars the US has been fighting, since the very beginning (US Civil War).

Faster better cheaper RAG

Working on a faster, better, cheaper (and more environmentally friendly) RAG based on the REALM approach of training the embedding model and the generative language model simultaneously, while refreshing the vector store index less often than the embedding model’s error is backpropagated.
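A rough sketch of that refresh schedule, under heavy assumptions: the "embedding model" is just a per-dimension weight vector, the update is a perceptron-style nudge standing in for backpropagation, and the generative model is left out entirely. All names, the toy data, and `refresh_every` are hypothetical. The only point is that the weights can change on any step while the document index is rebuilt only every few steps, so retrieval runs against slightly stale embeddings in between.

```python
def embed(weights, features):
    """Toy embedding model: scale each feature by a learned weight."""
    return [w * x for w, x in zip(weights, features)]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def build_index(weights, docs):
    """Embed every document with the current weights (the expensive step)."""
    return [embed(weights, doc) for doc in docs]


def train(docs, queries, steps, refresh_every, lr=0.1):
    weights = [1.0] * len(docs[0])
    index = build_index(weights, docs)
    rebuilds = 1
    for step in range(1, steps + 1):
        query, target = queries[(step - 1) % len(queries)]
        query_vec = embed(weights, query)
        # Retrieve against the possibly stale index.
        best = max(range(len(docs)), key=lambda i: dot(query_vec, index[i]))
        if best != target:
            # Perceptron-style update, a stand-in for a backprop step.
            for k in range(len(weights)):
                weights[k] += lr * query[k] * (docs[target][k] - docs[best][k])
        # Refresh the index far less often than the weights can change.
        if step % refresh_every == 0:
            index = build_index(weights, docs)
            rebuilds += 1
    return weights, rebuilds


# Made-up toy data: two documents and two (query, correct document) pairs.
docs = [[1.0, 0.0], [0.0, 1.0]]
queries = [([1.0, 1.2], 0), ([0.1, 1.0], 1)]
weights, rebuilds = train(docs, queries, steps=10, refresh_every=5)
print(weights, rebuilds)
```

Raising `refresh_every` trades retrieval freshness for compute, which is where the faster, cheaper and greener part would have to come from.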

Was good to be there to record the illegal coercion and lies that ICE agents would pester defendants with as they exited court with their family, friends, babies and lawyers. Fortunately a judge ordered ICE to halt detentions at courthouses and apparently this is the first day in a long time that no detention officers (hired thugs in olive drab) were present with ICE. So they knew they could only hand out letters demanding people go to ICE offices to get GPS ankle bracelets.

Spent the afternoon at the federal courthouse where the fiancée of a decades-long hard-working refugee spent hours in the hall in support of her self-deported fiancé, whose lawyer had to retrieve an ICE/DHS poster showing demands that immigrants and asylum-seekers self-deport rather than pursue their legal rights in court. All this so the prosecutor would agree to the motion to dismiss the case against a person no longer seeking residency in the US.

Feeling helpless to save lives in Palestine? This org is doing just that, and comes highly recommended by a close friend that has followed them for years and knows some of the people in charge: https://get.comet-me.org/

Escape From New York seems prophetic now: https://invidious.nerdvpn.de/watch?v=lFyCU2rsUYo

Excited to learn about the #InterferenceArchive https://interferencearchive.org/

And to learn about "Free University of San Diego" (#FUSD) which is reusing a lot of the lessons learned from Free University of New York (#FUNY):

https://interferencearchive.org/exhibition/free-education-2/

More FUSD info here:

https://drive.google.com/file/d/1HIvyBSOsHaVpuZS-U3zp4TPpt3YwAYa7/view

And their first class "Radical Ideas in Reactionary Times" coming up this Fall:

https://drive.google.com/file/d/1c0aifNIg6Hx0hDRIwdWohWe_cV5m7UcT/view

Shane Tamura drove from Vegas to NYC over the weekend with an assault rifle. Several cameras photographed his vehicle along the way.

In a mass shooting last night, a paid NYPD security guard, 3 civilians, and Wesley LePatner, the Blackstone REIT VC fund CEO, were killed and another person was injured, in the lobby and on the 33rd floor of 345 Park Ave in NYC. The shooter, Tamura, later killed himself.

Press conference last night: https://www.nbcnewyork.com/news/national-international/who-is-shane-devon-tamura-manhattan-shooting-suspect/6351590/

USB #ChoiceJacking makes #Android and #iOS security dialog selections for you on your phone over #Bluetooth or USB in less than the blink of an eye. Don't even think about plugging into a #USB charge station without a USB condom. Or use an OS that respects your #privacy and blocks USB file transfer and HID connection permanently. You don't know where that usb port has been:

https://hackread.com/choicejacking-attack-steals-data-phones-public-chargers/

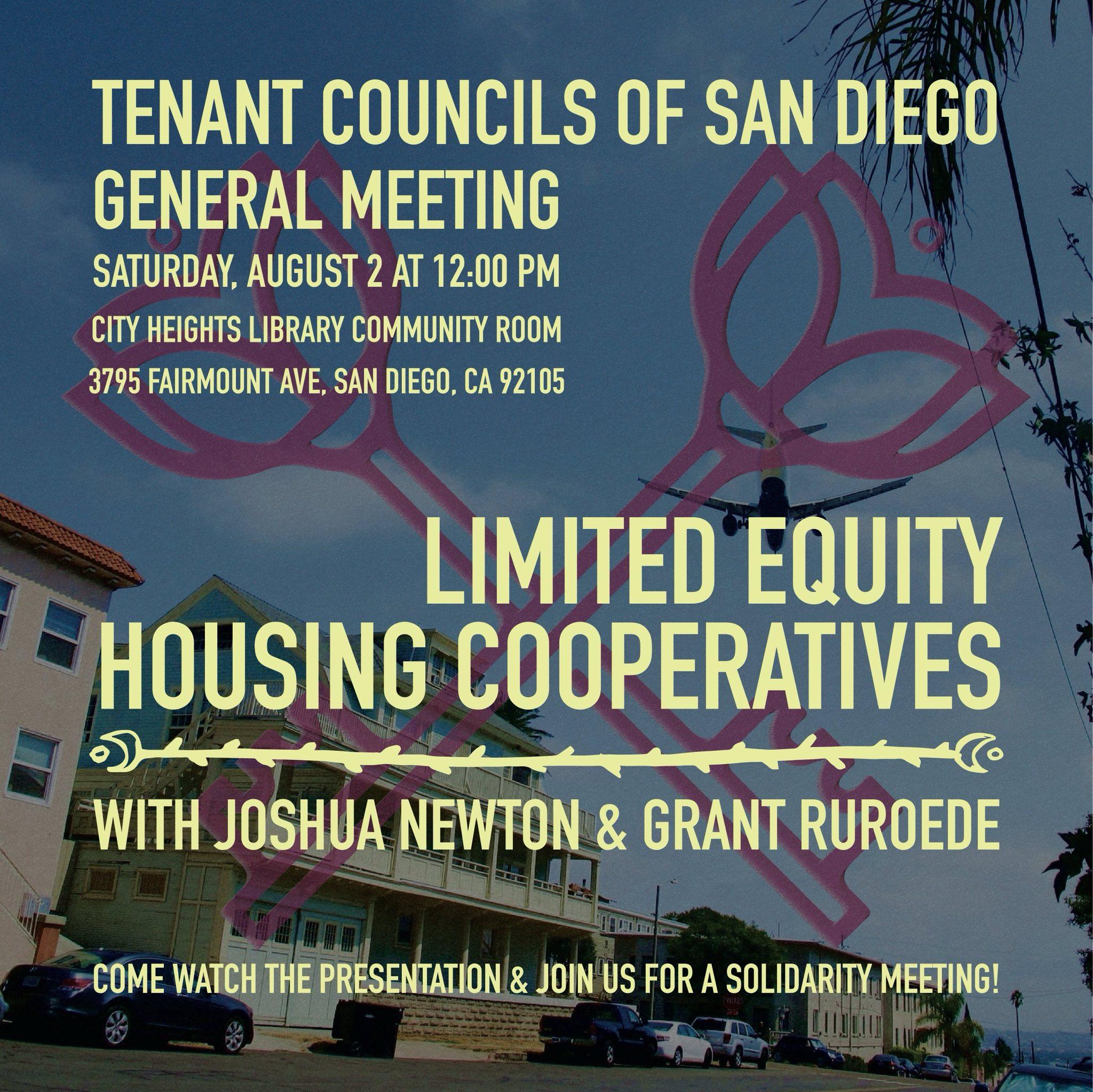

The #Tenant Councils of #SanDiego

General Meeting is next Saturday August 2 at noon in the #CityHeights Library #Community Room

3795 Fairmount Ave

San Diego, CA 92105

Joshua Newton & Grant Ruroede will present "Limited Equity Housing Cooperatives" explaining how some tenants may soon be able to afford to own their apartment home.

#DSA #sd #Unions #TenantOrganizing #Rent #SoCal